Mẹo Which Windows 10 feature provides an automated diagnosis and repair of boot problems plus a centralized platform for advanced recovery tool?

Thủ Thuật về Which Windows 10 feature provides an automated diagnosis and repair of boot problems plus a centralized platform for advanced recovery tool? Mới Nhất

Gan Feng Du đang tìm kiếm từ khóa Which Windows 10 feature provides an automated diagnosis and repair of boot problems plus a centralized platform for advanced recovery tool? được Cập Nhật vào lúc : 2022-08-20 08:56:02 . Với phương châm chia sẻ Bí kíp về trong nội dung bài viết một cách Chi Tiết 2022. Nếu sau khi Read Post vẫn ko hiểu thì hoàn toàn có thể lại Comment ở cuối bài để Ad lý giải và hướng dẫn lại nha.Red Hat Enterprise Linux 8

Nội dung chính- A guide to configuring basic system settings in Red Hat Enterprise Linux 8Making open source more inclusiveProviding feedback on Red Hat

documentationChapter 1. Getting started with RHEL System Roles1.1. Introduction

to RHEL System Roles1.2. RHEL System Roles terminology1.3. Applying a role1.4. Additional resourcesChapter 2. Changing basic environment settings2.1. Configuring the date and time2.1.1. Displaying the current date and time2.2. Configuring the system locale2.3. Configuring the keyboard layout2.4. Changing the language using desktop GUI2.5. Additional resourcesChapter 3. Configuring and managing network access3.1. Configuring the network and host name in

the graphical installation mode3.2. Configuring a static

Ethernet connection using nmcli3.3. Adding

a connection profile using nmtui3.4. Managing networking in the RHEL web console3.5. Managing networking using RHEL System Roles3.6. Additional resourcesChapter 4. Registering

the system and managing subscriptions4.1. Registering the system after the installation4.2. Registering

subscriptions with credentials in the web console4.3. Registering a system using Red Hat account on GNOME4.4. Registering a system using an activation key on GNOME4.5. Registering RHEL 8.4 using the installer GUIChapter 5. Making systemd services start boot time5.1. Enabling or disabling the services5.2. Managing services in the RHEL web consoleChapter 6. Configuring system security6.1. Enabling

the firewalld service6.2. Managing firewall in the rhel 8 web console6.3. Managing basic SELinux settings6.4. Ensuring the required state of selinux6.5. Switching SELinux modes in the RHEL 8 web console6.6. Additional resourcesChapter 7. Getting started with managing user accounts7.1. Managing accounts and groups using command line tools7.2. System user

accounts managed in the web console7.3. Adding new accounts using the web consoleChapter 8. Dumping a crashed kernel for later analysis8.1. What is kdump8.2. Configuring kdump memory usage and target location in web console8.3. kdump using RHEL System Roles8.4. Additional resourcesChapter 9. Recovering and restoring a system9.1. Setting up ReaR9.2. Using a ReaR rescue image on the 64-bit IBM Z architectureChapter 10. Troubleshooting problems using log files10.1. Services handling syslog messages10.2. Subdirectories storing syslog messages10.3. Inspecting log files using the web console10.4. Viewing logs using the command line10.5. Additional resourcesChapter 11. Accessing the Red Hat support11.1. Obtaining Red Hat support through Red Hat Customer Portal11.2. Troubleshooting problems using sosreportChapter 12. Managing software packages12.1. Software management tools in RHEL 812.2. Application streams12.3. Searching for software packages12.3.1. Searching packages with YUM12.3.2. Listing packages with YUM12.3.3. Listing repositories with YUM12.3.4. Displaying package information with YUM12.3.5. Listing package groups with YUM12.3.6. Specifying global

expressions in YUM input12.4. Installing software packages12.4.1. Installing packages with YUM12.4.2. Installing a package

group with YUM12.4.3. Specifying a package name in YUM input12.5. Updating software packages12.5.1. Checking for updates with YUM12.5.2. Updating a single package with YUM12.5.3. Updating a package group with YUM12.5.4. Updating all packages and their dependencies with YUM12.5.6. Automating software updates12.6. Uninstalling software packages12.6.1. Removing packages with YUM12.6.2. Removing a package group with YUM12.6.3. Specifying a package name in YUM input12.7. Managing software package groups12.7.1. Listing

package groups with YUM12.7.2. Installing a package

group with YUM12.7.3. Removing a package group with YUM12.7.4. Specifying global expressions in YUM input12.8. Handling package management history12.8.1. Listing transactions with YUM12.8.2. Reverting transactions with YUM12.8.3. Repeating transactions with YUM12.8.4. Specifying global expressions in YUM input12.9. Managing software repositories12.9.1. Setting YUM repository options12.9.2. Adding a YUM repository12.9.3. Enabling a YUM repository12.9.4. Disabling a YUM repository12.10. Configuring YUM12.10.1. Viewing the current YUM configurations12.10.2. Setting YUM main options12.10.3. Using YUM plug-insChapter 13. Introduction to systemd13.1. systemd unit types13.2. systemd main features13.3. Compatibility

changes13.4. Additional resourcesChapter 14. Managing system services with systemctl14.1. Service unit management with systemctl14.2. Comparison of a service utility with

systemctl14.3. Listing system services14.4. Displaying system service

status14.5. Positive and negative service dependencies14.6. Starting a system service14.7. Stopping a system service14.8. Restarting a system service14.9. Enabling a system

service14.10. Disabling a system serviceChapter 15. Working with systemd targets15.1. Difference between SysV runlevels and systemd targets15.2. Viewing the default target15.2.1. Changing the default target15.2.2. Changing the default target using symbolic link15.2.3. Changing the current targetChapter 16. Shutting down, suspending, and hibernating the system16.1. System shutdown16.2. Shutting down the system using the shutdown command16.3. Shutting down the

system using the systemctl command16.4. Restarting the system16.5. Suspending the system16.6. Hibernating the system16.7. Overview of the power management commands with

systemctlChapter 17. Working with systemd unit files17.1. Introduction to unit files17.2. Unit file structure17.3. Important [Unit] section options17.4. Important [Service] section options17.5. Important [Install] section options17.6. Creating custom unit files17.7. Creating a custom unit file by using the second instance of the sshd service17.8. Converting SysV init scripts to

unit files17.9. Finding the systemd service description17.10. Finding the systemd service dependencies17.11. Finding default targets of the service17.12. Finding files used by the service17.13. Modifying existing unit files17.14. Extending the default unit configuration17.15. Overriding the default unit configuration17.16. Changing the timeout limit17.17. Monitoring overridden units17.18. Working with instantiated units17.19. Important unit specifiers17.20. Additional resourcesChapter 18. Optimizing systemd to shorten the boot time18.1. Examining system boot performance18.2. A guide to selecting services that can be safely disabled18.3. Additional resourcesChapter 19. Introduction to managing user and group accounts19.1. Introduction to users and groups19.2. Configuring reserved user and group IDs19.3. User private groupsChapter 20. Managing user accounts in the web console20.1. System user accounts managed in the web console20.2. Adding new accounts using the web console20.3. Enforcing password expiration in the web console20.4. Terminating user sessions in the web consoleChapter 21. Managing users from the command line21.1. Adding

a new user from the command line21.2. Adding a new group from the command line21.3. Adding a user to a supplementary group from the command line21.4. Creating a group directoryChapter 22. Editing user groups using the command line22.1. Primary and supplementary user groups22.2. Listing the primary and supplementary groups of a user22.3. Changing the primary group of a user22.4. Adding a user to a supplementary group from the command line22.5. Removing a user from a supplementary group22.6. Changing all of the supplementary groups of a userChapter 23. Managing sudo access23.1. User authorizations in sudoers23.2. Granting sudo access to a user23.3. Enabling unprivileged users to run certain commands23.4. Additional

resourcesChapter 24. Changing and resetting the root password24.1. Changing the root password as the root user24.2. Changing or resetting the forgotten root password as a non-root user24.3. Resetting the root password on bootChapter 25. Managing

file permissions25.1. Base file permissions25.2. User file-creation mode mask25.3. Default file permissions25.4. Changing file permissions using symbolic values25.5. Changing file permissions using octal valuesChapter 26. Managing the umask26.1. Displaying the current value of the umask26.2. Displaying the default bash umask26.3. Setting the umask using symbolic values26.4. Setting the umask using octal values26.5. Changing the default umask for the non-login shell26.6. Changing the default umask for the login shell26.7. Changing

the default umask for a specific user26.8. Setting default permissions

for newly created home directoriesChapter 27. Using

dnstap in RHEL27.1. Recording DNS queries using dnstap in RHELChapter 28. Managing the Access Control List28.1. Displaying the current Access Control List28.2. Setting the Access Control ListChapter 29. Using the Chrony suite to configure NTP29.1. Introduction to chrony suite29.2. Using chronyc to control chronyd29.3. Migrating to chrony29.3.1. Migration scriptChapter 30. Using Chrony30.1. Managing chrony30.2. Checking if chrony is synchronized30.3. Manually adjusting the System Clock30.4. Setting up chrony for a system in an isolated network30.5. Configuring remote monitoring access30.6. Managing

time synchronization using RHEL System Roles30.7. Additional resourcesChapter 31. Chrony with HW timestamping31.1. Verifying support for hardware

timestamping31.2. Enabling hardware timestamping31.3. Configuring

client polling interval31.4. Enabling interleaved mode31.5. Configuring server for large number of clients31.6. Verifying hardware timestamping31.7. Configuring PTP-NTP bridgeChapter 32. Achieving some settings previously supported by NTP in chrony32.1. Monitoring by ntpq and ntpdc32.2. Using authentication mechanism based on public key cryptography32.3. Using ephemeral symmetric associations32.4. multicast/broadcast clientChapter 33. Overview of Network Time Security (NTS) in chrony33.1. Enabling Network Time Security (NTS) in the client configuration file33.2. Enabling Network Time Security (NTS) on the serverChapter 34. Using secure communications between two systems with OpenSSH34.1. SSH and OpenSSH34.2. Configuring and starting an OpenSSH server34.3. Setting an OpenSSH server for key-based authentication34.4. Generating SSH key pairs34.5. Using SSH keys stored on a smart card34.6. Making OpenSSH more secure34.7. Connecting to a remote server using an SSH jump host34.8. Connecting to remote machines with SSH keys using ssh-agent34.9. Additional resourcesChapter 35. Configuring a remote logging

solution35.1. The Rsyslog logging service35.2. Installing

Rsyslog documentation35.3. Configuring a server for remote logging over TCP35.4. Configuring remote logging to a server over TCP35.5. Configuring TLS-encrypted remote logging35.6. Configuring

a server for receiving remote logging information over UDP35.7. Configuring remote logging to a server over UDP35.8. Load balancing helper in Rsyslog35.9. Configuring reliable remote logging35.10. Supported Rsyslog modules35.11. Additional resourcesChapter 36. Using the Logging

System Role36.1. The Logging System Role36.2. Logging System Role parameters36.3. Applying a local Logging System Role36.4. Filtering logs in a local Logging System Role36.5. Applying a remote logging solution using the Logging System Role36.6. Using the Logging System Role with TLS36.6.1. Configuring client logging with TLS36.6.2. Configuring server logging with TLS36.7. Using the Logging System Roles with RELP36.7.1. Configuring

client logging with RELP36.7.2. Configuring server logging with

RELP36.8. Additional resourcesChapter 37. Introduction to Python37.1. Python versionsChapter 38. Installing and using Python38.1. Installing Python 338.2. Installing additional Python 3 packages38.3. Installing additional Python 3 tools for developers38.4. Installing Python 238.5. Migrating from Python 2 to Python 338.6. Using PythonChapter 39. Configuring the unversioned Python39.1. Configuring the unversioned python command directly39.2. Configuring the unversioned python command to the required Python version interactively39.3. Additional resourcesChapter 40. Packaging

Python 3 RPMs40.1. SPEC file description for a Python package40.2. Common macros for Python 3 RPMs40.3. Automatic provides for Python RPMsChapter 41. Handling interpreter directives in Python scripts41.1. Modifying interpreter directives in Python scripts41.2. Changing /usr/bin/python3 interpreter directives in your custom packagesChapter 42. Using the PHP scripting language42.1. Installing the PHP scripting language42.2. Using the PHP scripting language with a web server42.2.1. Using PHP with the Apache HTTP Server42.2.2. Using PHP with the nginx web server42.3. Running a PHP script using the command-line interface42.4. Additional resourcesChapter 43. Using langpacks43.1. Checking languages that provide langpacks43.2. Working with RPM weak dependency-based langpacks43.2.1. Listing already installed language support43.2.2. Checking the availability of language support43.2.3. Listing packages installed for a language43.2.4. Installing language

support43.2.5. Removing language support43.3. Saving disk space by using glibc-langpack-

A guide to configuring basic system settings in Red Hat Enterprise Linux 8

Abstract

This document describes basics of system administration on Red Hat Enterprise Linux 8. The title focuses on: basic tasks that a system administrator needs to do just after the operating system has been successfully installed, installing software with yum, using systemd for service management, managing users, groups and file permissions, using chrony to configure NTP, working with Python 3 and others.

Making open source more inclusive

Red Hat is committed to replacing problematic language in our code, documentation, and web properties. We are beginning with these four terms: master, slave, blacklist, and whitelist. Because of the enormity of this endeavor, these changes will be implemented gradually over several upcoming releases. For more details, see our CTO Chris Wright’s message.

Providing feedback on Red Hat documentation

We appreciate your input on our documentation. Please let us know how we could make it better.

For simple comments on specific passages:

Make sure you are viewing the documentation in the Multi-page HTML format. In addition, ensure you see the Feedback button in the upper right corner of the document. Use your mouse cursor to highlight the part of text that you want to comment on. Click the Add Feedback pop-up that appears below the highlighted text. Follow the displayed instructions.For submitting feedback via Bugzilla, create a new ticket:

Go to the Bugzilla website. As the Component, use Documentation. Fill in the Description field with your suggestion for improvement. Include a link to the relevant part(s) of documentation. Click Submit Bug.Chapter 1. Getting started with RHEL System Roles

This section explains what RHEL System Roles are. Additionally, it describes how to apply a particular role through an Ansible playbook to perform various system administration tasks.

1.1. Introduction to RHEL System Roles

RHEL System Roles is a collection of Ansible roles and modules. RHEL System Roles provide a configuration interface to remotely manage multiple RHEL systems. The interface enables managing system configurations across multiple versions of RHEL, as well as adopting new major releases.

On Red Hat Enterprise Linux 8, the interface currently consists of the following roles:

-

Certificate Issuance and Renewal Cockpit Firewalld HA Cluster Kernel Dumps Kernel Settings Logging Metrics (PCP) Microsoft SQL Server Networking Network Bound Disk Encryption client and Network Bound Disk Encryption server Postfix SELinux SSH client SSH server Storage Terminal Session Recording Time Synchronization VPN

All these roles are provided by the rhel-system-roles package available in the AppStream repository.

1.2. RHEL System Roles terminology

You can find the following terms across this documentation:

Ansible playbook Playbooks are Ansible’s configuration, deployment, and orchestration language. They can describe a policy you want your remote systems to enforce, or a set of steps in a general IT process. Control node Any machine with Ansible installed. You can run commands and playbooks, invoking /usr/bin/ansible or /usr/bin/ansible-playbook, from any control node. You can use any computer that has Python installed on it as a control node - laptops, shared desktops, and servers can all run Ansible. However, you cannot use a Windows machine as a control node. You can have multiple control nodes. Inventory A list of managed nodes. An inventory file is also sometimes called a “hostfile”. Your inventory can specify information like IP address for each managed node. An inventory can also organize managed nodes, creating and nesting groups for easier scaling. To learn more about inventory, see the Working with Inventory section. Managed nodes The network devices, servers, or both that you manage with Ansible. Managed nodes are also sometimes called “hosts”. Ansible is not installed on managed nodes.

1.3. Applying a role

The following procedure describes how to apply a particular role.

Prerequisites

Ensure that the rhel-system-roles package is installed on the system that you want to use as a control node:

# yum install rhel-system-rolesInstall the Ansible Core package:

# yum install ansible-coreThe Ansible Core package provides the ansible-playbook CLI, the Ansible Vault functionality, and the basic modules and filters required by RHEL Ansible content.

Ensure that you are able to create an Ansible inventory.

Inventories represent the hosts, host groups, and some of the configuration parameters used by the Ansible playbooks.

Playbooks are typically human-readable, and are defined in ini, yaml, json, and other file formats.

Ensure that you are able to create an Ansible playbook.

Playbooks represent Ansible’s configuration, deployment, and orchestration language. By using playbooks, you can declare and manage configurations of remote machines, deploy multiple remote machines or orchestrate steps of any manual ordered process.

A playbook is a list of one or more plays. Every play can include Ansible variables, tasks, or roles.

Playbooks are human-readable, and are defined in the yaml format.

Procedure

Create the required Ansible inventory containing the hosts and groups that you want to manage. Here is an example using a file called inventory.ini of a group of hosts called webservers:

[webservers] host1 host2 host3Create an Ansible playbook including the required role. The following example shows how to use roles through the roles: option for a playbook:

The following example shows how to use roles through the roles: option for a given play:

--- - hosts: webservers roles: - rhel-system-roles.network - rhel-system-roles.timesyncEvery role includes a README file, which documents how to use the role and supported parameter values. You can also find an example playbook for a particular role under the documentation directory of the role. Such documentation directory is provided by default with the rhel-system-roles package, and can be found in the following location:

/usr/share/doc/rhel-system-roles/SUBSYSTEM/Replace SUBSYSTEM with the name of the required role, such as selinux, kdump, network, timesync, or storage.

To execute the playbook on specific hosts, you must perform one of the following:

Edit the playbook to use hosts: host1[,host2,…], or hosts: all, and execute the command:

# ansible-playbook name.of.the.playbookEdit the inventory to ensure that the hosts you want to use are defined in a group, and execute the command:

# ansible-playbook -i name.of.the.inventory name.of.the.playbookSpecify all hosts when executing the ansible-playbook command:

# ansible-playbook -i host1,host2,... name.of.the.playbookBe aware that the -i flag specifies the inventory of all hosts that are available. If you have multiple targeted hosts, but want to select a host against which you want to run the playbook, you can add a variable in the playbook to be able to select a host. For example:

Ansible Playbook | example-playbook.yml: - hosts: " target_host " roles: - rhel-system-roles.network - rhel-system-roles.timesyncPlaybook execution command:

# ansible-playbook -i host1,..hostn -e target_host=host5 example-playbook.yml1.4. Additional resources

- Red Hat Enterprise Linux (RHEL) System Roles Managing local storage using RHEL System Roles

Deploying the same SELinux configuration on multiple systems using RHEL System Roles

Chapter 2. Changing basic environment settings

Configuration of basic environment settings is a part of the installation process. The following sections guide you when you change them later. The basic configuration of the environment includes:

- Date and time System locales Keyboard layout Language

2.1. Configuring the date and time

Accurate timekeeping is important for a number of reasons. In Red Hat Enterprise Linux, timekeeping is ensured by the NTP protocol, which is implemented by a daemon running in user space. The user-space daemon updates the system clock running in the kernel. The system clock can keep time by using various clock sources.

Red Hat Enterprise Linux 8 uses the chronyd daemon to implement NTP. chronyd is available from the chrony package. For more information, see Using the chrony suite to configure NTP.

2.1.1. Displaying the current date and time

To display the current date and time, use either of these steps.

Procedure

Enter the date command:

$ date Mon Mar 30 16:02:59 CEST 2022To see more details, use the timedatectl command:

$ timedatectl Local time: Mon 2022-03-30 16:04:42 CEST Universal time: Mon 2022-03-30 14:04:42 UTC RTC time: Mon 2022-03-30 14:04:41 Time zone: Europe/Prague (CEST, +0200) System clock synchronized: yes NTP service: active RTC in local TZ: no2.2. Configuring the system locale

System-wide locale settings are stored in the /etc/locale.conf file, which is read early boot by the systemd daemon. Every service or user inherits the locale settings configured in /etc/locale.conf, unless individual programs or individual users override them.

This section describes how to manage system locale.

Procedure

To list available system locale settings:

$ localectl list-locales C.utf8 aa_DJ aa_DJ.iso88591 aa_DJ.utf8 ...To display the current status of the system locales settings:

$ localectl statusTo set or change the default system locale settings, use a localectl set-locale sub-command as the root user. For example:

# localectl set-locale LANG=en_USAdditional resources

- man localectl(1), man locale(7), and man locale.conf(5)

2.3. Configuring the keyboard layout

The keyboard layout settings control the layout used on the text console and graphical user interfaces.

Procedure

To list available keymaps:

$ localectl list-keymaps ANSI-dvorak al al-plisi amiga-de amiga-us ...To display the current status of keymaps settings:

$ localectl status ... VC Keymap: us ...To set or change the default system keymap. For example:

# localectl set-keymap usAdditional resources

- man localectl(1), man locale(7), and man locale.conf(5)

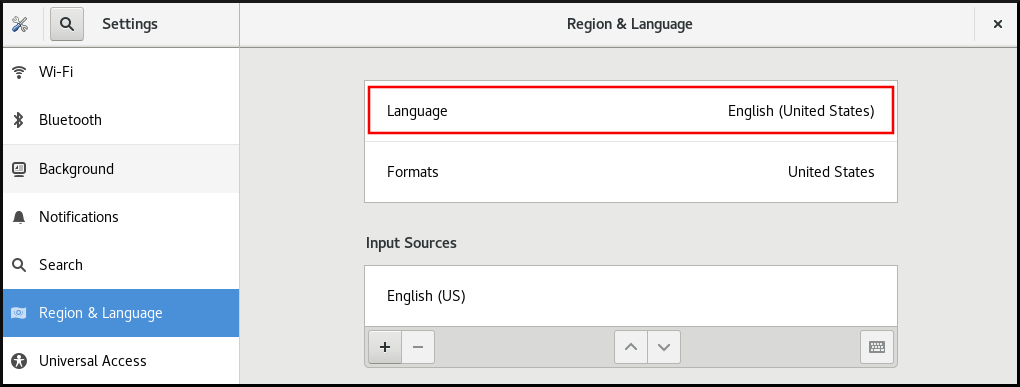

2.4. Changing the language using desktop GUI

This section describes how to change the system language using the desktop GUI.

Prerequisites

- Required language packages are installed on your system

Procedure

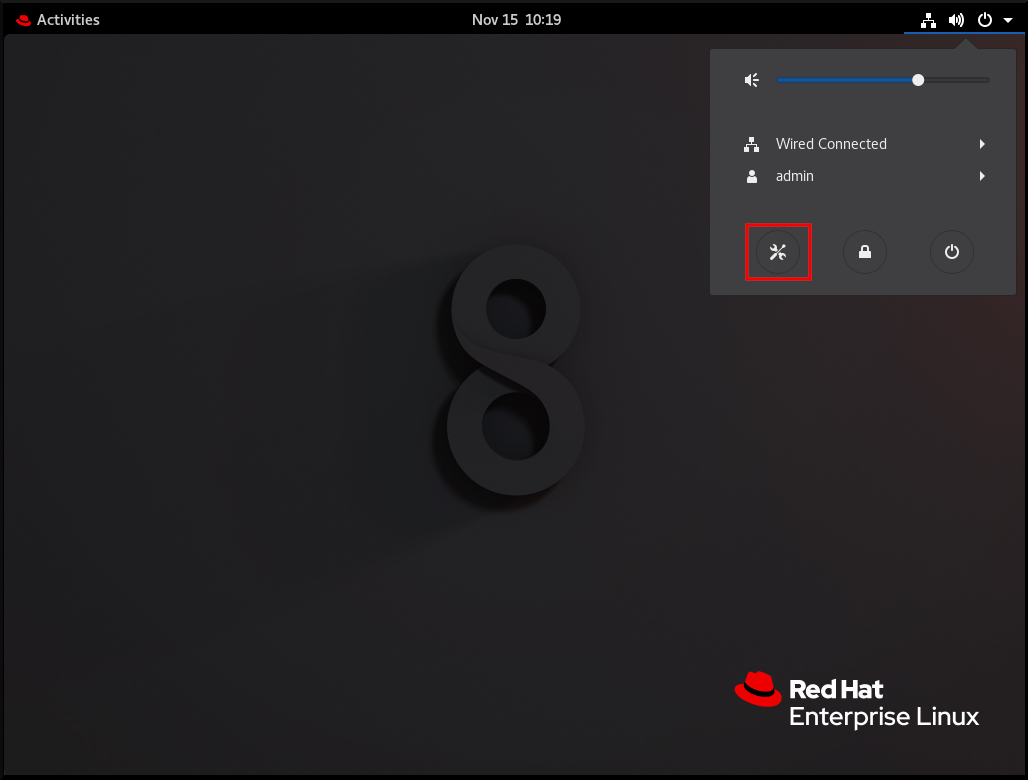

Open the GNOME Control Center from the System menu by clicking on its icon.

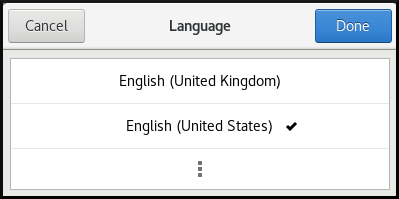

Click the Language menu.

Select the required region and language from the menu.

If your region and language are not listed, scroll down, and click More to select from available regions and languages.

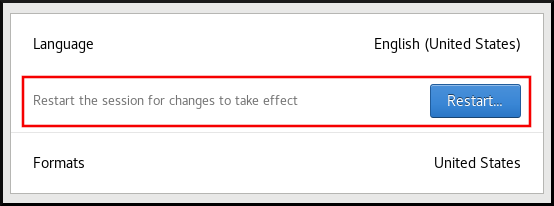

Click Restart for changes to take effect.

Some applications do not support certain languages. The text of an application that cannot be translated into the selected language remains in US English.

2.5. Additional resources

- Performing a standard RHEL 8 installation

Chapter 3. Configuring and managing network access

This section describes different options on how to add Ethernet connections in Red Hat Enterprise Linux.

3.1. Configuring the network and host name in the graphical installation mode

Follow the steps in this procedure to configure your network and host name.

Procedure

From the Installation Summary window, click Network and Host Name.From the list in the left-hand pane, select an interface. The details are displayed in the right-hand pane.

- There are several types of network device naming

standards used to identify network devices with persistent names, for example, em1 and wl3sp0. For information about these standards, see the Configuring and managing networking document.

Toggle the ON/OFF switch to enable or disable the selected interface.

The installation program automatically detects locally accessible interfaces, and you cannot add or remove them manually.

Click + to add a virtual network interface, which can be either: Team, Bond, Bridge, or VLAN. Click - to remove a virtual interface. Click Configure to change settings such as IP addresses, DNS servers, or routing configuration for an existing interface (both virtual and physical).Type a host name for your system in the Host Name field.

- The host name can be either a fully-qualified domain name (FQDN) in the format hostname.domainname, or a short host name with no domain name. Many networks have a Dynamic Host Configuration Protocol (DHCP) service that automatically supplies connected systems with a domain name. To allow the DHCP service to assign the domain name to this machine, specify only

the short host name. The value localhost means that no specific static host name for the target system is configured, and the actual host name of the installed system is configured during the processing of the network configuration, for example, by NetworkManager using DHCP or DNS. Host names can only contain alpha-numeric characters and - or .. Host names cannot start or end with - and ..

3.2. Configuring a static Ethernet connection using nmcli

This procedure describes adding an Ethernet connection with the following settings using the nmcli utility:

- A static IPv4 address - 192.0.2.1 with a /24 subnet mask A static IPv6 address - 2001:db8:1::1 with a /64 subnet mask An IPv4 default gateway - 192.0.2.254 An IPv6 default gateway - 2001:db8:1::fffe An IPv4 DNS server - 192.0.2.200 An IPv6 DNS server - 2001:db8:1::ffbb

A DNS search domain - example.com

Procedure

Add a new NetworkManager connection profile for the Ethernet connection:

# nmcli connection add con-name Example-Connection ifname enp7s0 type ethernetThe further steps modify the Example-Connection connection profile you created.

Set the IPv4 address:

# nmcli connection modify Example-Connection ipv4.addresses 192.0.2.1/24Set the IPv6 address:

# nmcli connection modify Example-Connection ipv6.addresses 2001:db8:1::1/64Set the IPv4 and IPv6 connection method to manual:

# nmcli connection modify Example-Connection ipv4.method manual # nmcli connection modify Example-Connection ipv6.method manualSet the IPv4 and IPv6 default gateways:

# nmcli connection modify Example-Connection ipv4.gateway 192.0.2.254 # nmcli connection modify Example-Connection ipv6.gateway 2001:db8:1::fffeSet the IPv4 and IPv6 DNS server addresses:

# nmcli connection modify Example-Connection ipv4.dns "192.0.2.200" # nmcli connection modify Example-Connection ipv6.dns "2001:db8:1::ffbb"To set multiple DNS servers, specify them space-separated and enclosed in quotes.

Set the DNS search domain for the IPv4 and IPv6 connection:

# nmcli connection modify Example-Connection ipv4.dns-search example.com # nmcli connection modify Example-Connection ipv6.dns-search example.comActivate the connection profile:

# nmcli connection up Example-Connection Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/13)Verification steps

Display the status of the devices and connections:

# nmcli device status DEVICE TYPE STATE CONNECTION enp7s0 ethernet connected Example-ConnectionTo display all settings of the connection profile:

# nmcli connection show Example-Connection connection.id: Example-Connection connection.uuid: b6cdfa1c-e4ad-46e5-af8b-a75f06b79f76 connection.stable-id: -- connection.type: 802-3-ethernet connection.interface-name: enp7s0 ...Use the ping utility to verify that this host can send packets to other hosts.

Ping an IP address in the same subnet.

For IPv4:

# ping 192.0.2.3For IPv6:

# ping 2001:db8:1::2If the command fails, verify the IP and subnet settings.

Ping an IP address in a remote subnet.

For IPv4:

# ping 198.162.3.1For IPv6:

# ping 2001:db8:2::1If the command fails, ping the default gateway to verify settings.

For IPv4:

# ping 192.0.2.254For IPv6:

# ping 2001:db8:1::fff3Use the host utility to verify that name resolution works. For example:

# host client.example.comIf the command returns any error, such as connection timed out or no servers could be reached, verify your DNS settings.

Troubleshooting steps

If the connection fails or if the network interface switches between an up and down status:

- Make sure that the network cable is plugged-in to the host and a switch. Check whether the link failure exists only on this host or also on other hosts connected to the same switch the server is connected to. Verify that the network cable and the network interface are working as expected. Perform hardware diagnosis steps and replace defect cables and network interface cards. If the configuration on the disk does not match the configuration on the device,

starting or restarting NetworkManager creates an in-memory connection that reflects the configuration of the device. For further details and how to avoid this problem, see NetworkManager duplicates a connection after restart of NetworkManager service

3.3. Adding a connection profile using nmtui

The nmtui application provides a text user interface to NetworkManager. This procedure describes how to add a new connection profile.

Prerequisites

- The NetworkManager-tui package is installed.

Procedure

Start the NetworkManager text user interface utility:

# nmtui Select the Edit a connection menu entry, and press Enter. Select the Add button, and press Enter. Select Ethernet, and press Enter.Fill the fields with the connection details.

Select OK to save the changes. Select Back to return to the main menu. Select Activate a connection, and press Enter. Select the new connection entry, and press Enter to activate the connection. Select Back to return to the main menu. Select Quit.

Select OK to save the changes. Select Back to return to the main menu. Select Activate a connection, and press Enter. Select the new connection entry, and press Enter to activate the connection. Select Back to return to the main menu. Select Quit. Verification steps

Display the status of the devices and connections:

# nmcli device status DEVICE TYPE STATE CONNECTION enp1s0 ethernet connected Example-ConnectionTo display all settings of the connection profile:

# nmcli connection show Example-Connection connection.id: Example-Connection connection.uuid: b6cdfa1c-e4ad-46e5-af8b-a75f06b79f76 connection.stable-id: -- connection.type: 802-3-ethernet connection.interface-name: enp1s0 ...If the configuration on the disk does not match the configuration on the device, starting or restarting NetworkManager creates an in-memory connection that reflects the configuration of the device. For further details and how to avoid this problem, see NetworkManager duplicates a connection after restart of NetworkManager service.

3.4. Managing networking in the RHEL web console

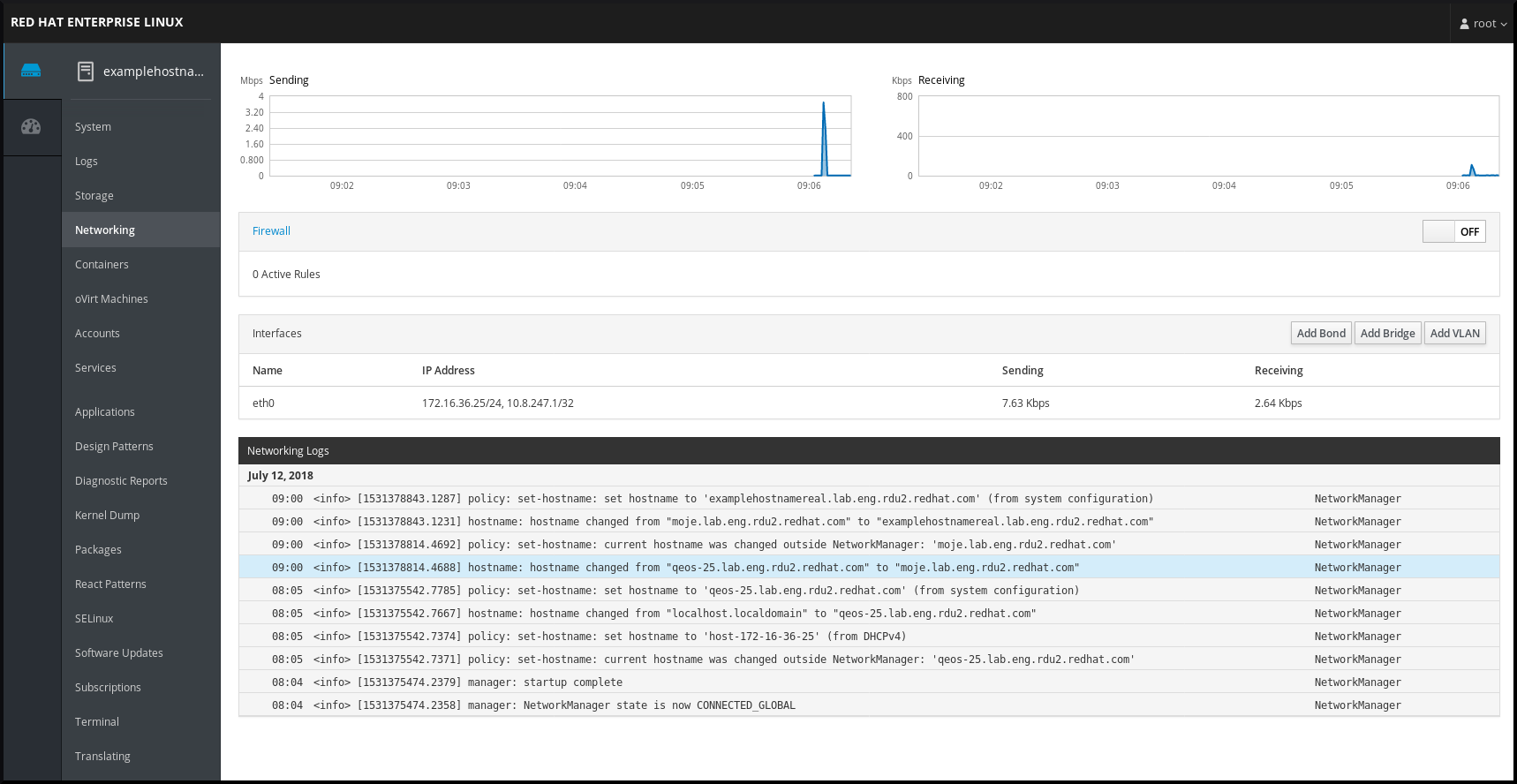

In the web console, the Networking menu enables you:

- To display currently received and sent packets To display the most important characteristics of available network interfaces

To display content of the networking logs. To add various types of network interfaces (bond, team, bridge, VLAN)

Figure 3.1. Managing Networking in the RHEL web console

3.5. Managing networking using RHEL System Roles

You can configure the networking connections on multiple target machines using the network role.

The network role allows to configure the following types of interfaces:

- Ethernet Bridge Bonded VLAN MacVLAN Infiniband

The required networking connections for each host are provided as a list within the network_connections variable.

The network role updates or creates all connection profiles on the target system exactly as specified in the network_connections variable. Therefore, the network role removes options from the specified profiles if the options are only present on the system but not in the network_connections variable.

The following example shows how to apply the network role to ensure that an Ethernet connection with the required parameters exists:

An example playbook applying the network role to set up an Ethernet connection with the required parameters

# SPDX-License-Identifier: BSD-3-Clause --- - hosts: network-test vars: network_connections: # Create one ethernet profile and activate it. # The profile uses automatic IP addressing # and is tied to the interface by MAC address. - name: prod1 state: up type: ethernet autoconnect: yes mac: "00:00:5e:00:53:00" mtu: 1450 roles: - rhel-system-roles.network3.6. Additional resources

- Configuring and managing networking

Chapter 4. Registering the system and managing subscriptions

Subscriptions cover products installed on Red Hat Enterprise Linux, including the operating system itself.

You can use a subscription to Red Hat Content Delivery Network to track:

- Registered systems Products installed on your systems Subscriptions attached to the installed products

4.1. Registering the system after the installation

Use the following procedure to register your system if you have not registered it during the installation process already.

Prerequisites

- A valid user account in the Red Hat Customer Portal. See the

Create a Red Hat Login page. An active subscription for the RHEL system. For more information about the installation process, see Performing a standard RHEL 8 installation.

Procedure

Register and automatically subscribe your system in one step:

# subscription-manager register --usernameThe command prompts you to enter your Red Hat Customer Portal user name and password.

If the registration process fails, you can register your system with a specific pool. For guidance on how to do it, proceed with the following steps:

Determine the pool ID of a subscription that you require:

# subscription-manager list --availableThis command displays all available subscriptions for your Red Hat account. For every subscription, various characteristics are displayed, including the pool ID.

Attach the appropriate subscription to your system by replacing pool_id with the pool ID determined in the previous step:

# subscription-manager attach --pool=pool_id4.2. Registering subscriptions with credentials in the web console

Use the following steps to register a newly installed Red Hat Enterprise Linux with account credentials using the RHEL web console.

Prerequisites

A valid user account on the Red Hat Customer Portal.

See the Create a Red Hat Login page.

Active subscription for your RHEL system.Procedure

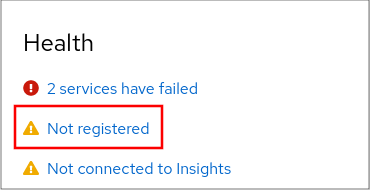

Log in to the RHEL web console. For details, see Logging in to the web console.In the Health filed in the Overview page, click the Not registered warning, or click Subscriptions in the main menu to move to page with your subscription information.

.

.

In the Overview filed, click Register.

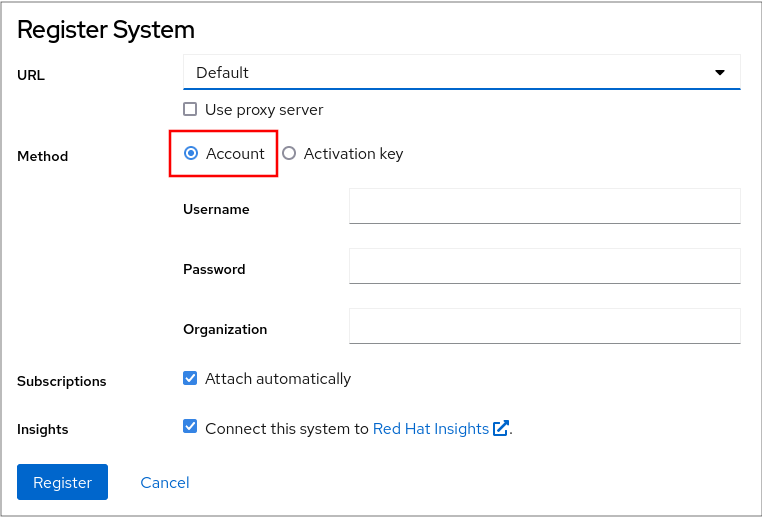

In the Register system dialog box, select that you want to register using your account credentials.

Optionally, enter your organization’s name or ID.

If your account belongs to more than one organization on the Red Hat Customer Portal, you have to add the organization name or organization ID. To get the org ID, go to your Red Hat contact point.

- If you do not want to connect your system to Red Hat Insights, uncheck the Insights check box.

At this point, your Red Hat Enterprise Linux system has been successfully registered.

4.3. Registering a system using Red Hat account on GNOME

Follow the steps in this procedure to enroll your system with your Red Hat account.

Prerequisites

A valid account on Red Hat customer portal.

See the Create a Red Hat Login page for new user registration.

Procedure

Go to the system menu, which is accessible from the top-right screen corner and click the Settings icon. In the → section, click Register. Select Registration Server. If you are not using the Red Hat server, enter the server address in the URL field. In the Registration Type menu, select Red Hat Account.Under Registration Details:

- Enter your Red Hat account user name in the Login field. Enter your Red Hat account password in the

Password field. Enter the name of your organization in the Organization field.

4.4. Registering a system using an activation key on GNOME

Follow the steps in this procedure to register your system with an activation key. You can get the activation key from your organization administrator.

Prerequisites

Activation key or keys.

See the Activation Keys page for creating new activation keys.

Procedure

Go to the system menu, which is accessible from the top-right screen corner and click the Settings icon. In the → section, click Register. Select Registration Server. Enter URL to the customized server, if you are not using the Red Hat server. In the Registration Type menu, select Activation Keys.Under Registration Details:

Enter Activation Keys.

Separate multiple keys by a comma (,).

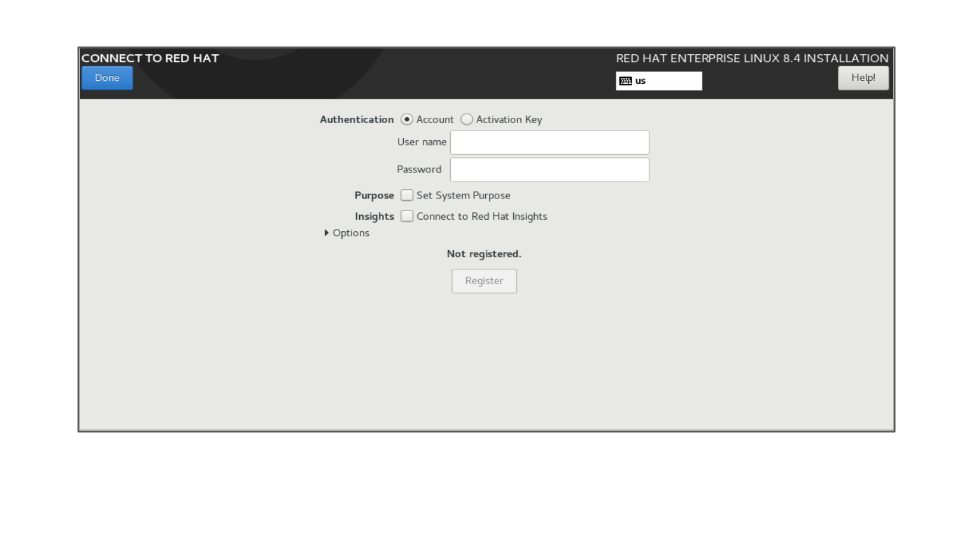

Enter the name or ID of your organization in the Organization field.4.5. Registering RHEL 8.4 using the installer GUI

Use the following steps to register a newly installed Red Hat Enterprise Linux 8.4 using the RHEL installer GUI.

Prerequisites

A valid user account on the Red Hat Customer Portal. See the Create a Red Hat Login page.

If the user account has appropriate entitlements (or the account operates in Simple Content Access mode) they can register using username and password only, without presenting an activation key.

A valid Activation Key and Organization idProcedure

Authenticate your Red Hat account using the Account or Activation Key option.

Select the Set System Purpose field and from the drop-down menu select the Role, SLA, and Usage for the RHEL 8.4 installation.

At this point, your Red Hat Enterprise Linux 8.4 system has been successfully registered.

Chapter 5. Making systemd services start boot time

systemd is a system and service manager for Linux operating systems that introduces the concept of systemd units.

This section provides information on how to ensure that a service is enabled or disabled boot time. It also explains how to manage the services through the web console.

5.1. Enabling or disabling the services

You can determine which services are enabled or disabled boot time already during the installation process. You can also enable or disable a service on an installed operating system.

This section describes the steps for enabling or disabling those services on an already installed operating system:

Prerequisites

- You must have root access to the system.

Procedure

To enable a service, use the enable option:

# systemctl enable service_nameReplace service_name with the service you want to enable.

You can also enable and start a service in a single command:

# systemctl enable --now service_nameTo disable a service, use the disable option:

# systemctl disable service_nameReplace service_name with the service you want to disable.

You cannot enable a service that has been previously masked. You have to unmask it first:

# systemctl unmask service_name5.2. Managing services in the RHEL web console

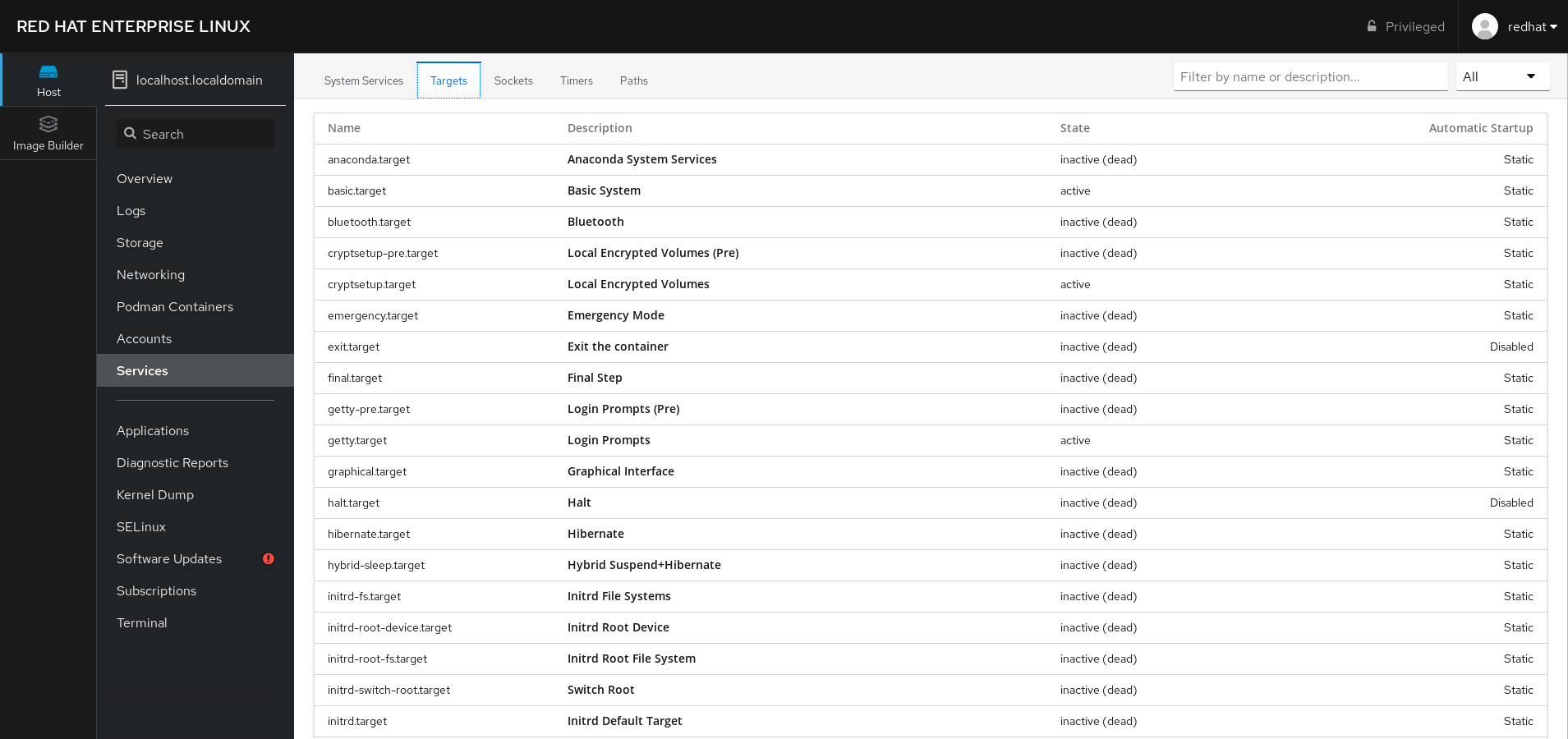

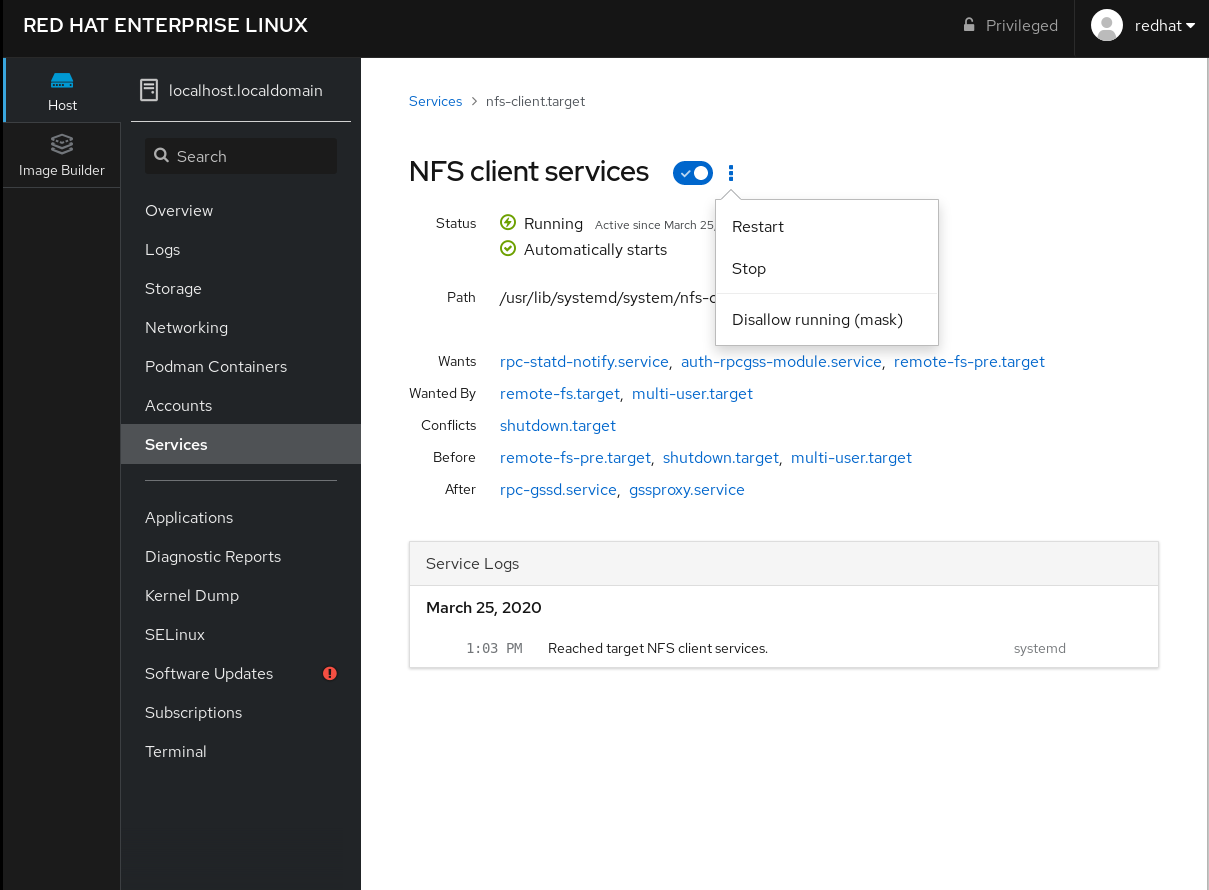

This section describes how you can also enable or disable a service using the web console. You can manage systemd targets, services, sockets, timers, and paths. You can also check the service status, start or stop services, enable or disable them.

Prerequisites

- You must have root access to the system.

Procedure

Open localhost:9090 in a web browser of your preference. Log in to the web console with your root credentials on the system.To display the web console panel, click the Host icon, which is in the upper-left corner of the window.

On the menu, click Services.

You can manage systemd targets, services, sockets, timers, and paths.

For example, to manage the service NFS client services:

Click Targets. Select the service NFS client services. To enable or disable the service, click the Toogle button.To stop the service, click the ⫶ button and choose the option Stop.

Chapter 6. Configuring system security

Computer security is the protection of computer systems and their hardware, software, information, and services from theft, damage, disruption, and misdirection. Ensuring computer security is an essential task, in particular in enterprises that process sensitive data and handle business transactions.

This section covers only the basic security features that you can configure after installation of the operating system.

6.1. Enabling the firewalld service

A firewall is a network security system that monitors and controls incoming and outgoing network traffic according to configured security rules. A firewall typically establishes a barrier between a trusted secure internal network and another outside network.

The firewalld service, which provides a firewall in Red Hat Enterprise Linux, is automatically enabled during installation.

To enable the firewalld service, follow this procedure.

Procedure

Display the current status of firewalld:

$ systemctl status firewalld ● firewalld.service - firewalld - dynamic firewall daemon Loaded: loaded (/usr/lib/systemd/system/firewalld.service; disabled; vendor preset: enabled) Active: inactive (dead) ...If firewalld is not enabled and running, switch to the root user, and start the firewalld service and enable to start it automatically after the system restarts:

# systemctl enable --now firewalldVerification steps

Check that firewalld is running and enabled:

$ systemctl status firewalld ● firewalld.service - firewalld - dynamic firewall daemon Loaded: loaded (/usr/lib/systemd/system/firewalld.service; enabled; vendor preset: enabled) Active: active (running) ...6.2. Managing firewall in the rhel 8 web console

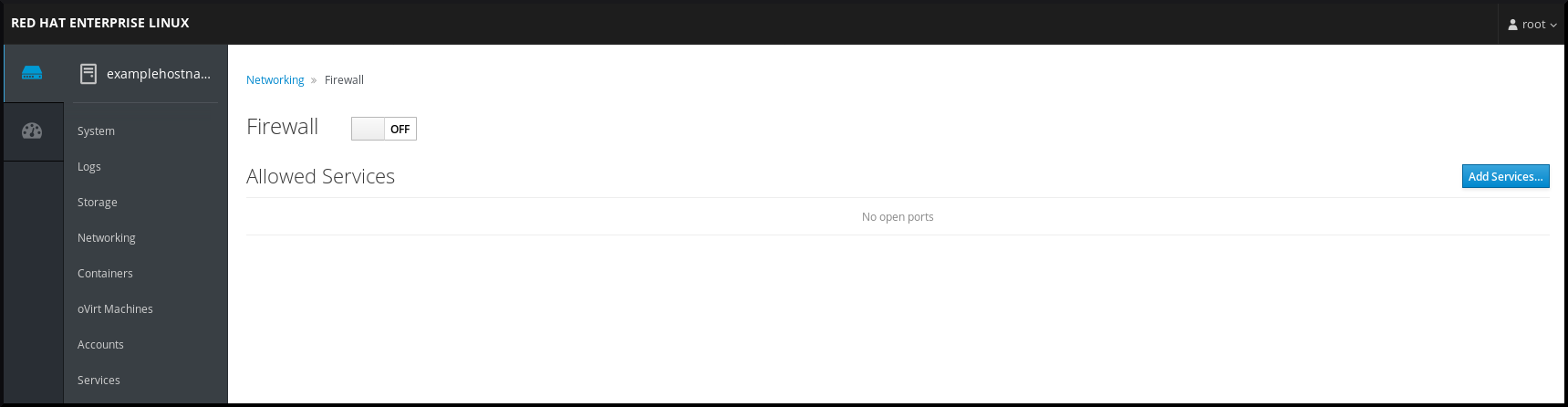

To configure the firewalld service in the web console, navigate to → .

By default, the firewalld service is enabled.

Procedure

To enable or disable firewalld in the web console, switch the Firewall toggle button.

Additionally, you can define more fine-grained access through the firewall to a service using the Add services… button.

6.3. Managing basic SELinux settings

Security-Enhanced Linux (SELinux) is an additional layer of system security that determines which processes can access which files, directories, and ports. These permissions are defined in SELinux policies. A policy is a set of rules that guide the SELinux security engine.

SELinux has two possible states:

- Disabled Enabled

When SELinux is enabled, it runs in one of the following modes:

Enabled

- Enforcing Permissive

In enforcing mode, SELinux enforces the loaded policies. SELinux denies access based on SELinux policy rules and enables only the interactions that are explicitly allowed. Enforcing mode is the safest SELinux mode and is the default mode after installation.

In permissive mode, SELinux does not enforce the loaded policies. SELinux does not deny access, but reports actions that break the rules to the /var/log/audit/audit.log log. Permissive mode is the default mode during installation. Permissive mode is also useful in some specific cases, for example when troubleshooting problems.

6.4. Ensuring the required state of selinux

By default, SELinux operates in enforcing mode. However, in specific scenarios, you can set SELinux to permissive mode or even disable it.

Red Hat recommends to keep your system in enforcing mode. For debugging purposes, you can set SELinux to permissive mode.

Follow this procedure to change the state and mode of SELinux on your system.

Procedure

Display the current SELinux mode:

$ getenforceTo temporarily set SELinux:

To Enforcing mode:

# setenforce EnforcingTo Permissive mode:

# setenforce PermissiveAfter reboot, SELinux mode is set to the value specified in the /etc/selinux/config configuration file.

To set SELinux mode to persist across reboots, modify the SELINUX variable in the /etc/selinux/config configuration file.

For example, to switch SELinux to enforcing mode:

# This file controls the state of SELinux on the system. # SELINUX= can take one of these three values: # enforcing - SELinux security policy is enforced. # permissive - SELinux prints warnings instead of enforcing. # disabled - No SELinux policy is loaded. SELINUX=enforcing ...Disabling SELinux reduces your system security. Avoid disabling SELinux using the SELINUX=disabled option in the /etc/selinux/config file because this can result in memory leaks and race conditions causing kernel panics. Instead, disable SELinux by adding the selinux=0 parameter to the kernel command line. For more information, see Changing SELinux modes boot time.

6.5. Switching SELinux modes in the RHEL 8 web console

You can set SELinux mode through the RHEL 8 web console in the SELinux menu item.

By default, SELinux enforcing policy in the web console is on, and SELinux operates in enforcing mode. By turning it off, you switch SELinux to permissive mode. Note that this selection is automatically reverted on the next boot to the configuration defined in the /etc/sysconfig/selinux file.

Procedure

In the web console, use the Enforce policy toggle button in the SELinux menu item to turn SELinux enforcing policy on or off.

6.6. Additional resources

- Generating SSH key pairs

Setting an OpenSSH server for key-based authentication

Security hardening Using SELinux Securing networks

Deploying the same SELinux configuration on multiple systems

Chapter 7. Getting started with managing user accounts

Red Hat Enterprise Linux is a multi-user operating system, which enables multiple users on different computers to access a single system installed on one machine. Every user operates under its own account, and managing user accounts thus represents a core element of Red Hat Enterprise Linux system administration.

The following are the different types of user accounts:

Normal user accounts:

Normal accounts are created for users of a particular system. Such accounts can be added, removed, and modified during normal system administration.

System user accounts:

System user accounts represent a particular applications identifier on a system. Such accounts are generally added or manipulated only software installation time, and they are not modified later.

System accounts are presumed to be available locally on a system. If these accounts are configured and provided remotely, such as in the instance of an LDAP configuration, system breakage and service start failures can occur.

For system accounts, user IDs below 1000 are reserved. For normal accounts, you can use IDs starting 1000. However, the recommended practice is to assign IDs starting 5000. For assigning IDs, see the /etc/login.defs file.

Group:

A group is an entity which ties together multiple user accounts for a common purpose, such as granting access to particular files.

7.1. Managing accounts and groups using command line tools

This section describes basic command-line tools to manage user accounts and groups.

To display user and group IDs:

$ id uid=1000(example.user) gid=1000(example.user) groups=1000(example.user),10(wheel) context=unconfined_u:unconfined_r:unconfined_t:s0-s0:c0.c1023To create a new user account:

# useradd example.userTo assign a new password to a user account belonging to example.user:

# passwd example.userTo add a user to a group:

# usermod -a -G example.group example.userAdditional resources

- man useradd(8), man passwd(1), and man usermod(8)

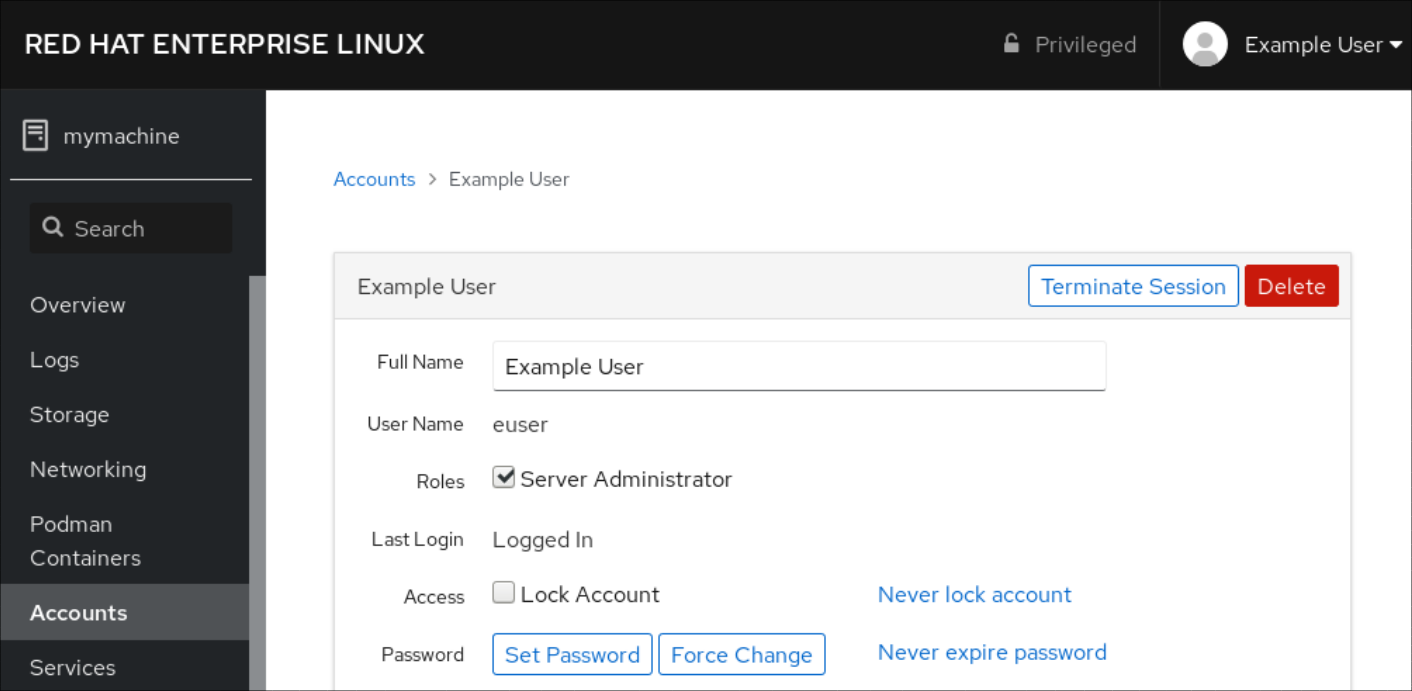

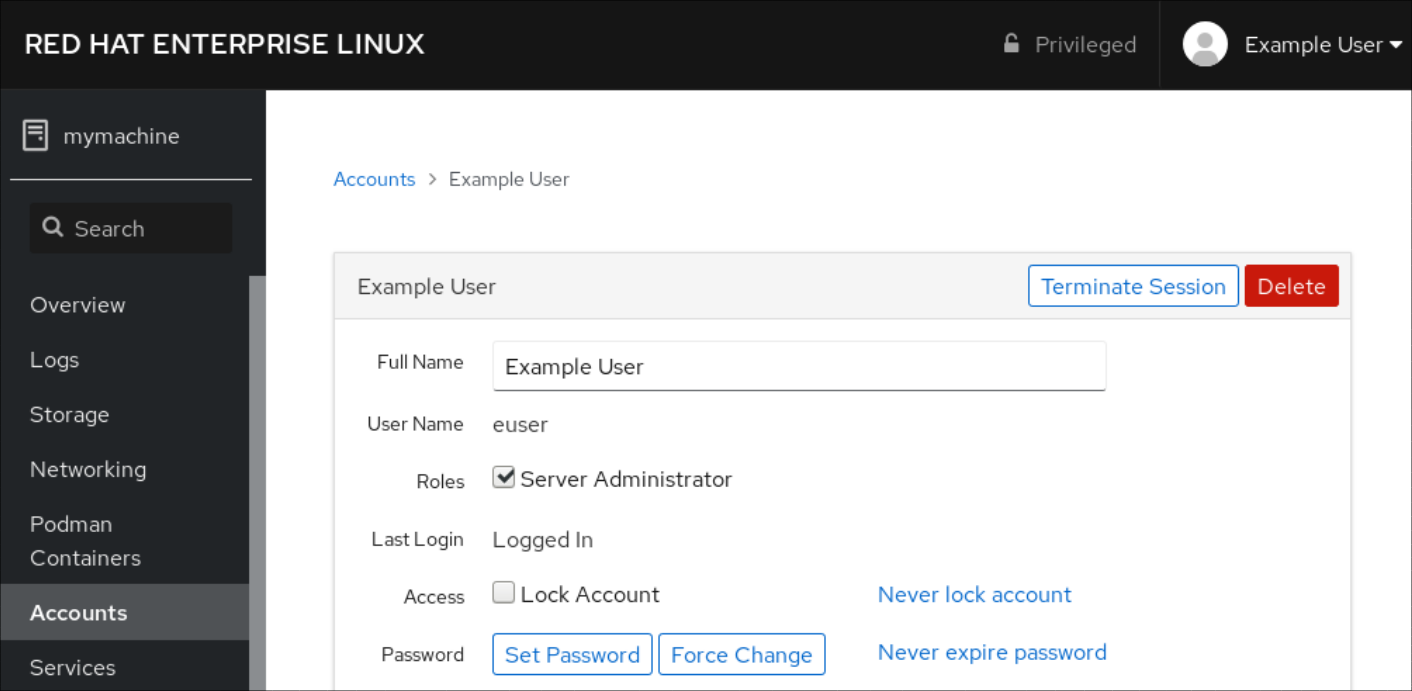

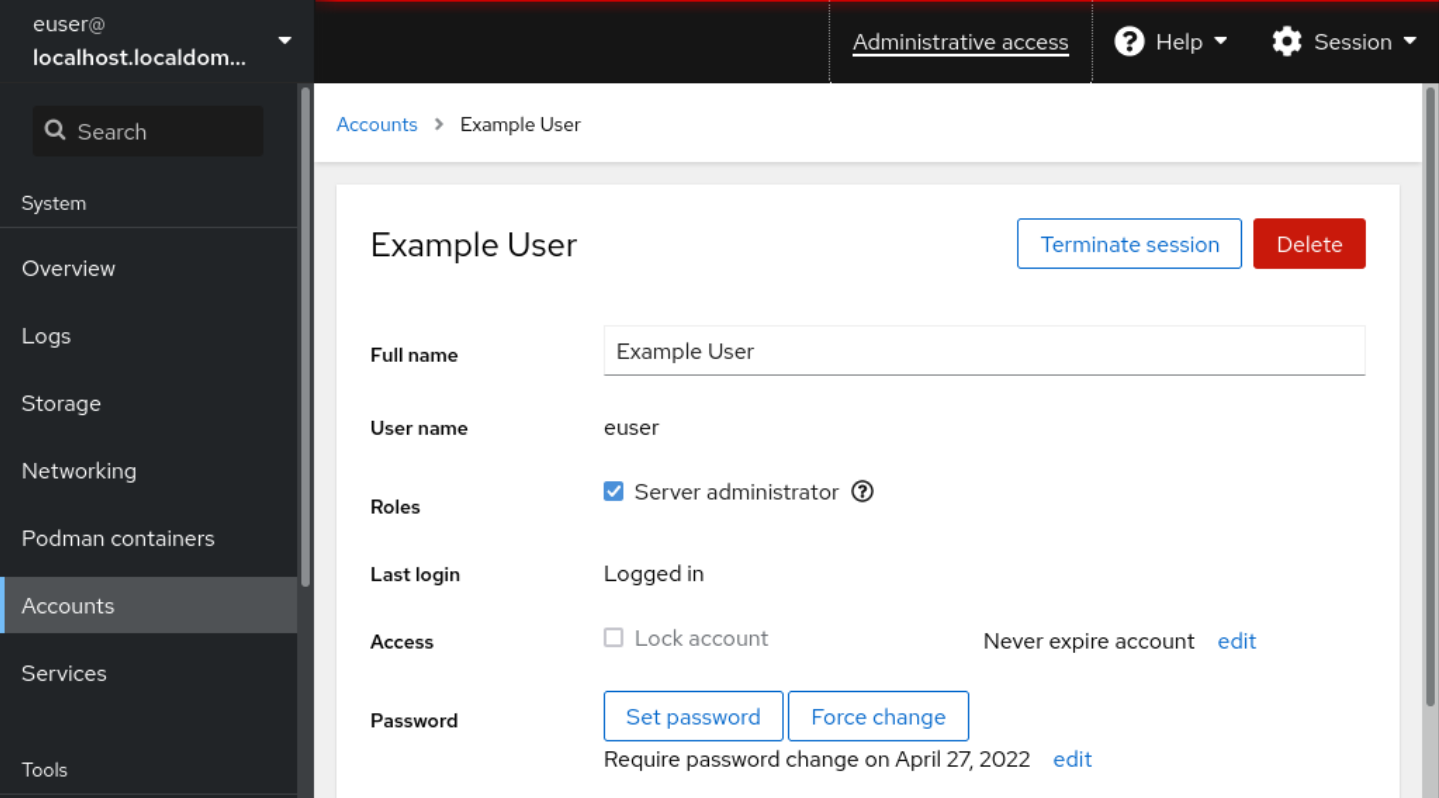

7.2. System user accounts managed in the web console

With user accounts displayed in the RHEL web console you can:

- Authenticate users when accessing the system. Set the access rights to the system.

The RHEL web console displays all user accounts located in the system. Therefore, you can see least one user account just after the first login to the web console.

After logging into the RHEL web console, you can perform the following operations:

- Create new users accounts. Change their parameters. Lock accounts. Terminate user sessions.

7.3. Adding new accounts using the web console

Use the following steps for adding user accounts to the system and setting administration rights to the accounts through the RHEL web console.

Procedure

Log in to the RHEL web console. Click Accounts. Click Create New Account.In the Full Name field, enter the full name of the user.

The RHEL web console automatically suggests a user name from the full name and fills it in the User Name field. If you do not want to use the original naming convention consisting of the first letter of the first name and the whole surname, update the suggestion.

In the Password/Confirm fields, enter the password and retype it for verification that your password is correct.

The color bar placed below the fields shows you security level of the entered password, which does not allow you to create a user with a weak password.

Click Create to save the settings and close the dialog box. Select the newly created account.Select Server Administrator in the Roles item.

Now you can see the new account in the Accounts settings and you can use the credentials to connect to the system.

Chapter 8. Dumping a crashed kernel for later analysis

To analyze why a system crashed, you can use the kdump service to save the contents of the system’s memory for later analysis. This section provides a brief introduction to kdump, and information about configuring kdump using the RHEL web console or using the corresponding RHEL system role.

8.1. What is kdump

kdump is a service which provides a crash dumping mechanism. The service enables you to save the contents of the system memory for analysis. kdump uses the kexec system call to boot into the second kernel (a capture kernel) without rebooting; and then captures the contents of the crashed kernel’s memory (a crash dump or a vmcore) and saves it into a file. The second kernel resides in a reserved part of the system memory.

A kernel crash dump can be the only information available in the sự kiện of a system failure (a critical bug). Therefore, operational kdump is important in mission-critical environments. Red Hat advise that system administrators regularly update and test kexec-tools in your normal kernel update cycle. This is especially important when new kernel features are implemented.

You can enable kdump for all installed kernels on a machine or only for specified kernels. This is useful when there are multiple kernels used on a machine, some of which are stable enough that there is no concern that they could crash.

When kdump is installed, a default /etc/kdump.conf file is created. The file includes the default minimum kdump configuration. You can edit this file to customize the kdump configuration, but it is not required.

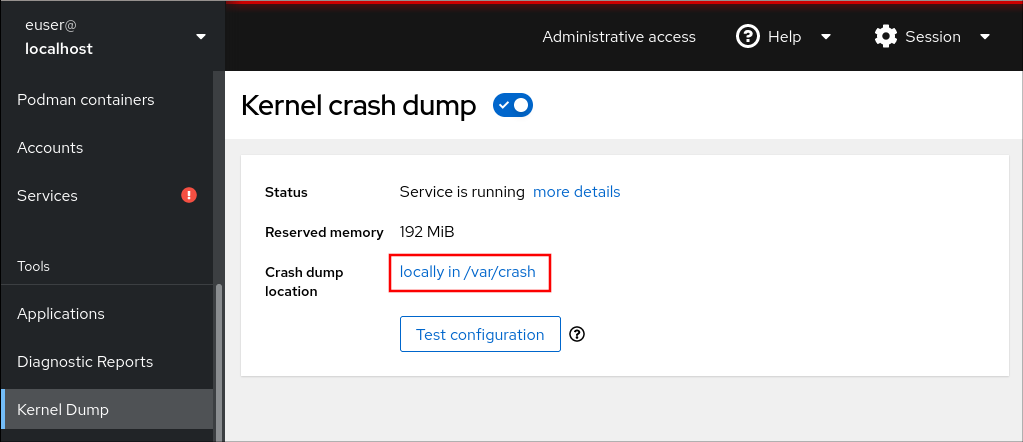

8.2. Configuring kdump memory usage and target location in web console

The procedure below shows you how to use the Kernel Dump tab in the RHEL web console interface to configure the amount of memory that is reserved for the kdump kernel. The procedure also describes how to specify the target location of the vmcore dump file and how to test your configuration.

Procedure

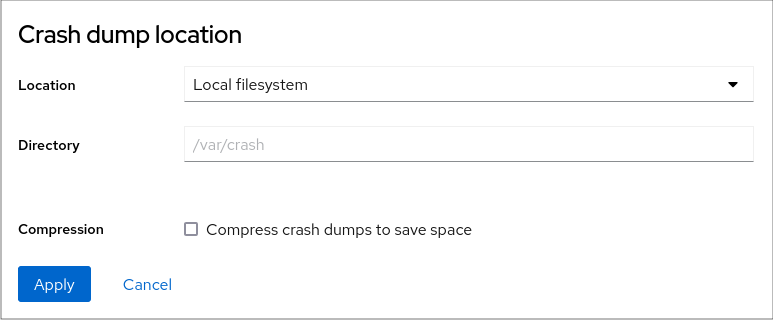

Open the Kernel Dump tab and start the kdump service. Configure the kdump memory usage using the command line.Click the link next to the Crash dump location option.

Select the Local Filesystem option from the drop-down and specify the directory you want to save the dump in.

Alternatively, select the Remote over SSH option from the drop-down to send the vmcore to a remote machine using the SSH protocol.

Fill the Server, ssh key, and Directory fields with the remote machine address, ssh key location, and a target directory.

Another choice is to select the Remote over NFS option from the drop-down and fill the Mount field to send the vmcore to a remote machine using the NFS protocol.

Tick the Compression check box to reduce the size of the vmcore file.

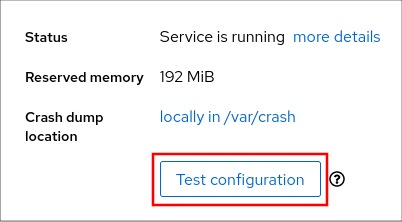

Test your configuration by crashing the kernel.

Click Test configuration.

Click Test configuration. In the Test kdump settings field, click Crash system.

This step disrupts execution of the kernel and results in a system crash and loss of data.

8.3. kdump using RHEL System Roles

RHEL System Roles is a collection of Ansible roles and modules that provide a consistent configuration interface to remotely manage multiple RHEL systems. The kdump role enables you to set basic kernel dump parameters on multiple systems.

The kdump role replaces the kdump configuration of the managed hosts entirely by replacing the /etc/kdump.conf file. Additionally, if the kdump role is applied, all previous kdump settings are also replaced, even if they are not specified by the role variables, by replacing the /etc/sysconfig/kdump file.

The following example playbook shows how to apply the kdump system role to set the location of the crash dump files:

--- - hosts: kdump-test vars: kdump_path: /var/crash roles: - rhel-system-roles.kdumpFor a detailed reference on kdump role variables, install the rhel-system-roles package, and see the README.md or README.html files in the /usr/share/doc/rhel-system-roles/kdump directory.

8.4. Additional resources

- Installing kdump

Configuring kdump on the command line

Configuring kdump in the web console

Chapter 9. Recovering and restoring a system

To recover and restore a system using an existing backup, Red Hat Enterprise Linux provides the Relax-and-Recover (ReaR) utility.

You can use the utility as a disaster recovery solution and also for system migration.

The utility enables you to perform the following tasks:

- Produce a bootable image and restore the system from an existing backup, using the image. Replicate the original storage layout. Restore user and system files.

Restore the system to a different hardware.

Additionally, for disaster recovery, you can also integrate certain backup software with ReaR.

Setting up ReaR involves the following high-level steps:

Install ReaR. Modify ReaR configuration file, to add backup method details. Create rescue system. Generate backup files.9.1. Setting up ReaR

Use the following steps to install the package for using the Relax-and-Recover (ReaR) utility, create a rescue system, configure and generate a backup.

Prerequisites

Necessary configurations as per the backup restore plan are ready.

Note that you can use the NETFS backup method, a fully-integrated and built-in method with ReaR.

Procedure

Install the ReaR utility by running the following command:

# yum install rearModify the ReaR configuration file in an editor of your choice, for example:

# vi /etc/rear/local.confAdd the backup setting details to /etc/rear/local.conf. For example, in the case of the NETFS backup method, add the following lines:

BACKUP=NETFS BACKUP_URL=backup.locationReplace backup.location by the URL of your backup location.

To configure ReaR to keep the previous backup archive when the new one is created, also add the following line to the configuration file:

NETFS_KEEP_OLD_BACKUP_COPY=yTo make the backups incremental, meaning that only the changed files are backed up on each run, add the following line:

BACKUP_TYPE=incrementalCreate a rescue system:

# rear mkrescueTake a backup as per the restore plan. For example, in the case of the NETFS backup method, run the following command:

# rear mkbackuponlyAlternatively, you can create the rescue system and the backup in a single step by running the following command:

# rear mkbackupThis command combines the functionality of the rear mkrescue and rear mkbackuponly commands.

9.2. Using a ReaR rescue image on the 64-bit IBM Z architecture

Basic Relax and Recover (ReaR) functionality is now available on the 64-bit IBM Z architecture as a Technology Preview. You can create a ReaR rescue image on IBM Z only in the z/VM environment. Backing up and recovering logical partitions (LPARs) has not been tested.

ReaR on the 64-bit IBM Z architecture is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process. For more information about the support scope of Red Hat Technology Preview features, see https://access.redhat.com/support/offerings/techpreview.

The only output method currently available is Initial Program Load (IPL). IPL produces a kernel and an initial ramdisk (initrd) that can be used with the zIPL bootloader.

Prerequisites

ReaR is installed.

- To install ReaR, run the yum install rear command

Procedure

Add the following variables to the /etc/rear/local.conf to configure ReaR for producing a rescue image on the 64-bit IBM Z architecture:

To configure the IPL output method, add OUTPUT=IPL.

To configure the backup method and destination, add BACKUP and BACKUP_URL variables. For example:

BACKUP=NETFS BACKUP_URL=nfs://The local backup storage is currently not supported on the 64-bit IBM Z architecture.

Optionally, you can also configure the OUTPUT_URL variable to save the kernel and initrd files. By default, the OUTPUT_URL is aligned with BACKUP_URL.To perform backup and rescue image creation:

rear mkbackup This creates the kernel and initrd files the location specified by the BACKUP_URL or OUTPUT_URL (if set) variable, and a backup using the specified backup method. To recover the system, use the ReaR kernel and initrd files created in step 3, and boot from a Direct Attached Storage Device (DASD) or a Fibre Channel Protocol (FCP)-attached SCSI device prepared with the zipl boot loader, kernel, and initrd. For more information, see Using a Prepared DASD. When the rescue kernel and initrd get booted, it starts the ReaR rescue environment. Proceed with system recovery.Currently, the rescue process reformats all the DASDs (Direct Attached Storage Devices) connected to the system. Do not attempt a system recovery if there is any valuable data present on the system storage devices. This also includes the device prepared with the zipl bootloader, ReaR kernel, and initrd that were used to boot into the rescue environment. Ensure to keep a copy.

Chapter 10. Troubleshooting problems using log files

Log files contain messages about the system, including the kernel, services, and applications running on it. These contain information that helps troubleshoot issues or monitor system functions. The logging system in Red Hat Enterprise Linux is based on the built-in syslog protocol. Particular programs use this system to record events and organize them into log files, which are useful when auditing the operating system and troubleshooting various problems.

10.1. Services handling syslog messages

The following two services handle syslog messages:

- The systemd-journald daemon The Rsyslog service

The systemd-journald daemon collects messages from various sources and forwards them to Rsyslog for further processing. The systemd-journald daemon collects messages from the following sources:

- Kernel Early stages of the boot process Standard and error output of daemons as they start up and run Syslog

The Rsyslog service sorts the syslog messages by type and priority and writes them to the files in the /var/log directory. The /var/log directory persistently stores the log messages.

10.2. Subdirectories storing syslog messages

The following subdirectories under the /var/log directory store syslog messages.

- /var/log/messages - all syslog messages except the following /var/log/secure - security and authentication-related messages and errors /var/log/maillog - mail server-related messages and errors

/var/log/cron - log files related to periodically executed tasks /var/log/boot.log - log files related to system startup

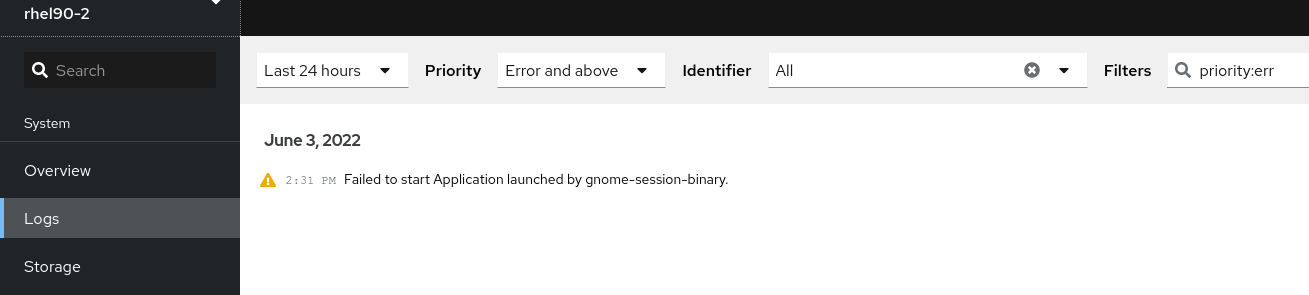

10.3. Inspecting log files using the web console

Follow the steps in this procedure to inspect the log files using the RHEL web console.

Figure 10.1. Inspecting the log files in the RHEL 8 web console

10.4. Viewing logs using the command line

The Journal is a component of systemd that helps to view and manage log files. It addresses problems connected with traditional logging, closely integrated with the rest of the system, and supports various logging technologies and access management for the log files.

You can use the journalctl command to view messages in the system journal using the command line, for example:

$ journalctl -b | grep kvm May 15 11:31:41 localhost.localdomain kernel: kvm-clock: Using msrs 4b564d01 and 4b564d00 May 15 11:31:41 localhost.localdomain kernel: kvm-clock: cpu 0, msr 76401001, primary cpu clock ...Table 10.1. Viewing system information

CommandDescription

journalctl

Shows all collected journal entries.

journalctl FILEPATH

Shows logs related to a specific file. For example, the journalctl /dev/sda command displays logs related to the /dev/sda file system.

journalctl -b

Shows logs for the current boot.

journalctl -k -b -1

Shows kernel logs for the current boot.

Table 10.2. Viewing information on specific services

CommandDescription

journalctl -b _SYSTEMD_UNIT=foo

Filters log to see ones matching the "foo" systemd service.

journalctl -b _SYSTEMD_UNIT=foo _PID=number

Combines matches. For example, this command shows logs for systemd-units that match foo and the PID number.

journalctl -b _SYSTEMD_UNIT=foo _PID=number + _SYSTEMD_UNIT=foo1

The separator “+” combines two expressions in a logical OR. For example, this command shows all messages from the foo service process with the PID plus all messages from the foo1 service (from any of its processes).

journalctl -b _SYSTEMD_UNIT=foo _SYSTEMD_UNIT=foo1

This command shows all entries matching either expression, referring to the same field. Here, this command shows logs matching a systemd-unit foo or a systemd-unit foo1.

Table 10.3. Viewing logs related to specific boots

CommandDescription

journalctl --list-boots

Shows a tabular list of boot numbers, their IDs, and the timestamps of the first and last message pertaining to the boot. You can use the ID in the next command to view detailed information.

journalctl --boot=ID _SYSTEMD_UNIT=foo

Shows information about the specified boot ID.

10.5. Additional resources

- man journalctl(1) Configuring a remote logging solution

Chapter 11. Accessing the Red Hat support

This section describes how to effectively troubleshoot your problems using Red Hat support and sosreport.

To obtain support from Red Hat, use the Red Hat Customer Portal, which provides access to everything available with your subscription.

11.1. Obtaining Red Hat support through Red Hat Customer Portal

The following section describes how to use the Red Hat Customer Portal to get help.

Prerequisites

- A valid user account on the Red Hat Customer Portal. See

Create a Red Hat Login. An active subscription for the RHEL system.

Procedure

Access Red Hat support:

Open a new support case. Initiate a live chat with a Red Hat expert. Contact a Red Hat expert by making a call or sending an email.11.2. Troubleshooting problems using sosreport

The sosreport command collects configuration details, system information and diagnostic information from a Red Hat Enterprise Linux system.

The following section describes how to use the sosreport command to produce reports for your support cases.

Prerequisites

- A valid user account on the Red Hat Customer Portal. See Create a Red Hat Login. An active subscription for the RHEL system. A support-case number.

Procedure

Install the sos package:

# yum install sosThe default minimal installation of Red Hat Enterprise Linux does not include the sos package, which provides the sosreport command.

Generate a report:

# sosreportAttach the report to your support case.

See the How can I attach a file to a Red Hat support case? Red Hat Knowledgebase article for more information.

Note that when attaching the report, you are prompted to enter the number of the relevant support case.

Chapter 12. Managing software packages

12.1. Software management tools in RHEL 8

In RHEL 8, software installation is enabled by the new version of the YUM tool (YUM v4), which is based on the DNF technology.

Upstream documentation identifies the technology as DNF and the tool is referred to as DNF in the upstream. As a result, some output returned by the new YUM tool in RHEL 8 mentions DNF.

Although YUM v4 used in RHEL 8 is based on DNF, it is compatible with YUM v3 used in RHEL 7. For software installation, the yum command and most of its options work the same way in RHEL 8 as they did in RHEL 7.

Selected yum plug-ins and utilities have been ported to the new DNF back end, and can be installed under the same names as in RHEL 7. Packages also provide compatibility symlinks, so the binaries, configuration files, and directories can be found in usual locations.

Note that the legacy Python API provided by YUM v3 is no longer available. You can migrate your plug-ins and scripts to the new API provided by YUM v4 (DNF Python API), which is stable and fully supported. See DNF API Reference for more information.

12.2. Application streams

RHEL 8 introduces the concept of Application Streams. Multiple versions of user space components are now delivered and updated more frequently than the core operating system packages. This provides greater flexibility to customize Red Hat Enterprise Linux without impacting the underlying stability of the platform or specific deployments.

Components made available as Application Streams can be packaged as modules or RPM packages, and are delivered through the AppStream repository in RHEL 8. Each Application Stream has a given life cycle, either the same as RHEL 8 or shorter, more suitable to the particular application. Application Streams with a shorter life cycle are listed in the Red Hat Enterprise Linux 8 Application Streams Life Cycle page.

Modules are collections of packages representing a logical unit: an application, a language stack, a database, or a set of tools. These packages are built, tested, and released together.

Module streams represent versions of the Application Stream components. For example, two streams (versions) of the PostgreSQL database server are available in the postgresql module: PostgreSQL 10 (the default stream) and PostgreSQL 9.6. Only one module stream can be installed on the system. Different versions can be used in separate containers.

Detailed module commands are described in the Installing, managing, and removing user-space components document. For a list of modules available in AppStream, see the Package manifest.

12.3. Searching for software packages

yum allows you to perform a complete set of operations with software packages.

The following section describes how to use yum to:

- Search for packages. List packages. List repositories. Display information about the packages. List package groups. Specify global expressions in yum input.

12.3.1. Searching packages with YUM

Use the following procedure to find a package providing a particular application or other content.

Procedure

To search for a package, use:

# yum search termReplace term with a term related to the package.

Note that yum search command returns term matches within the name and summary of the packages. This makes the search faster and enables you to search for packages you do not know the name of, but for which you know a related term.

To include term matches within package descriptions, use:

# yum search --all termReplace term with a term you want to search for in a package name, summary, or description.

Note that yum search --all enables a more exhaustive but slower search.

12.3.2. Listing packages with YUM

Use the following procedure to list installed and available packages.

Procedure

To list information on all installed and available packages, use:

# yum list --allTo list all packages installed on your system, use:

# yum list --installedTo list all packages in all enabled repositories that are available to install, use:

# yum list --availableNote that you can filter the results by appending global expressions as arguments. See Specifying global expressions in yum input for more details.

12.3.3. Listing repositories with YUM

Use the following procedure to list enabled and disabled repositories.

Procedure

To list all enabled repositories on your system, use:

# yum repolistTo list all disabled repositories on your system, use:

# yum repolist --disabledTo list both enabled and disabled repositories, use:

# yum repolist --allTo list additional information about the repositories, use:

# yum repoinfoNote that you can filter the results by passing the ID or name of repositories as arguments or by appending global expressions. See Specifying global expressions in yum input for more details.

12.3.4. Displaying package information with YUM

You can display various types of information about a package using YUM, for example version, release, size, loaded plugins, and more.

Procedure

To display information about one or more packages, use:

# yum info package-nameReplace package-name with the name of the package.

Note that you can filter the results by appending global expressions as arguments. See Specifying global expressions in yum input for more details.

12.3.5. Listing package groups with YUM

Use yum to view installed package groups and filter the listing results.

Procedure

To view the number of installed and available groups, use:

# yum group summaryTo list all installed and available groups, use:

# yum group listNote that you can filter the results by appending command line options for the yum group list command (--hidden, --available). For more available options see the man pages.

To list mandatory and optional packages contained in a particular group, use:

# yum group info group-nameReplace group-name with the name of the group.

Note that you can filter the results by appending global expressions as arguments. See Specifying global expressions in yum input for more details.

12.3.6. Specifying global expressions in YUM input

yum commands allow you to filter the results by appending one or more glob expressions as arguments. You have to escape global expressions when passing them as arguments to the yum command.

Procedure

To ensure global expressions are passed to yum as intended, use one of the following methods:

Double-quote or single-quote the entire global expression.

# yum provides "*/file-name"Replace file-name with the name of the file.

Escape the wildcard characters by preceding them with a backslash () character.

# yum provides */file-nameReplace file-name with the name of the file.

12.4. Installing software packages

The following section describes how to use yum to:

- Install packages. Install a package group. Specify a package name in yum input.

12.4.1. Installing packages with YUM

To install a package and all the package dependencies, use:

# yum install package-nameReplace package-name with the name of the package.

To install multiple packages and their dependencies simultaneously, use:

# yum install package-name-1 package-name-2Replace package-name-1 and package-name-2 with the names of the packages.

When installing packages on a multilib system (AMD64, Intel 64 machine), you can specify the architecture of the package by appending it to the package name:

# yum install package-name.archReplace package-name.arch with the name and architecture of the package.

If you know the name of the binary you want to install, but not the package name, you can use the path to the binary as an argument:

# yum install /usr/sbin/binary-fileReplace /usr/sbin/binary-file with a path to the binary file.

yum searches through the package lists, finds the package which provides /usr/sbin/binary-file, and prompts you as to whether you want to install it.

To install a previously-downloaded package from a local directory, use:

# yum install /path/Replace /path/ with the path to the package.

Note that you can optimize the package search by explicitly defining how to parse the argument. See Section 12.4.3, “Specifying a package name in YUM input” for more details.

12.4.2. Installing a package group with YUM

The following procedure describes how to install a package group by a group name or by a groupID using yum.

Procedure

To install a package group by a group name, use:

# yum group install group-nameOr

# yum install @group-nameReplace group-name with the full name of the group or environmental group.

To install a package group by the groupID, use:

# yum group install groupIDReplace groupID with the ID of the group.

12.4.3. Specifying a package name in YUM input

To optimize the installation and removal process, you can append -n, -na, or -nerva suffixes to yum install and yum remove commands to explicitly define how to parse an argument:

To install a package using its exact name, use:

# yum install-n nameReplace name with the exact name of the package.

To install a package using its exact name and architecture, use:

# yum install-na name.architectureReplace name and architecture with the exact name and architecture of the package.

To install a package using its exact name, epoch, version, release, and architecture, use:

# yum install-nevra name-epoch:version-release.architectureReplace name, epoch, version, release, and architecture with the exact name, epoch, version, release, and architecture of the package.

12.5. Updating software packages

yum allows you to check if your system has any pending updates. You can list packages that need updating and choose to update a single package, multiple packages, or all packages once. If any of the packages you choose to update have dependencies, they are updated as well.

The following section describes how to use yum to:

- Check for updates. Update a single package. Update a package group. Update all packages and their dependencies. Apply security

updates. Automate software updates.

12.5.1. Checking for updates with YUM

The following procedure describes how to check the available updates for packages installed on your system using yum.

Procedure

To see which packages installed on your system have available updates, use:

# yum check-updateThe output returns the list of packages and their dependencies that have an update available.

12.5.2. Updating a single package with YUM

Use the following procedure to update a single package and its dependencies using yum.

To update a package, use:

# yum update package-nameReplace package-name with the name of the package.

When applying updates to kernel, yum always installs a new kernel regardless of whether you are using the yum update or yum install command.

12.5.3. Updating a package group with YUM

Use the following procedure to update a group of packages and their dependencies using yum.

Procedure

To update a package group, use:

# yum group update group-nameReplace group-name with the name of the package group.

12.5.4. Updating all packages and their dependencies with YUM

Use the following procedure to update all packages and their dependencies using yum.

Procedure

To update all packages and their dependencies, use:

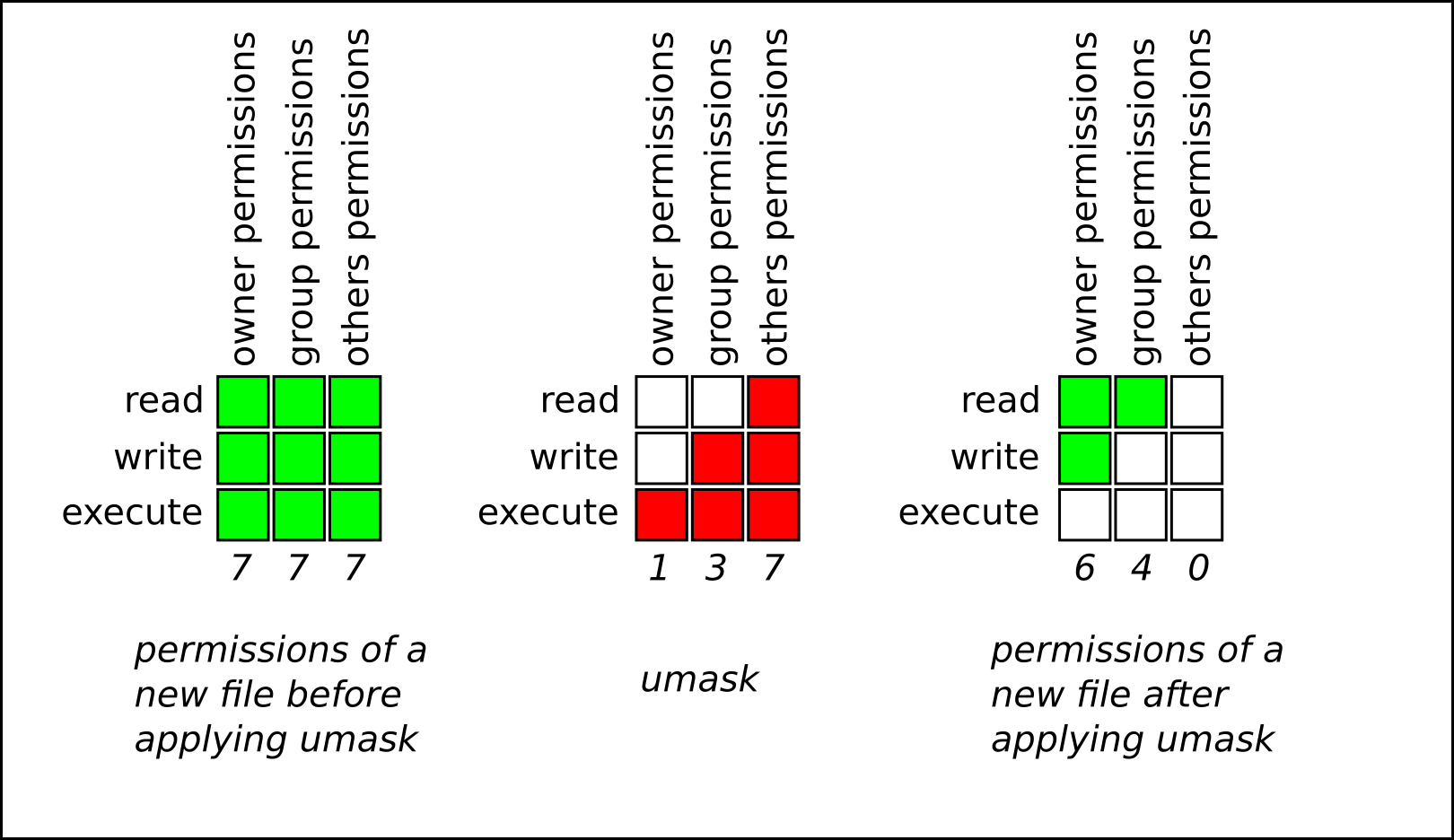

# yum update12.5.6. Automating software updates